Duplicate Photo Finder (DPF) is designed for the average computer user: anyone who is frustrated with disk space wasted on duplicate photos. The program is designed to do one thing, remove duplicate photos, and it does it very well. DPF is not a jack-of-all-trades when it comes to duplicate file removal. Its user-friendly interface makes it a snap to use, as it requires only basic Windows skills. Key features include batch delete, batch move, and support for popular file formats (JPG, GIF, BMP, TIF, and PNG).

Duplicate Photo Finder simplifies the process of removing large quantities of duplicate photos. Each photo is scanned and compared to the rest. DPF will very accurately locate duplicate photos, even if they have different file names. Once the search is complete, duplicates are grouped together and displayed in a user-friendly window.

There is mention of promoting the selected files into reference files. You might try doing that and see if that causes the opposite set of files to be marked as duplicates. It's just a wild guess at this point, but maybe worth investigating.
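The scan-and-compare step described above can be illustrated with a minimal sketch. It assumes that "duplicate" means byte-for-byte identical content, which is only one possible definition; how DPF actually compares photos is not stated here. The names `file_digest` and `find_duplicate_groups` are made up for this example.

```python
import hashlib
from collections import defaultdict
from pathlib import Path

# Extensions taken from the format list above, plus common spelling variants.
PHOTO_EXTENSIONS = {".jpg", ".jpeg", ".gif", ".bmp", ".tif", ".tiff", ".png"}


def file_digest(path: Path, chunk_size: int = 1 << 20) -> str:
    """Return the SHA-256 hex digest of a file, reading it in chunks."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def find_duplicate_groups(folder: Path) -> list:
    """Group photos under `folder` whose contents are identical.

    File names play no role: only the content digest is compared.
    """
    by_digest = defaultdict(list)
    for path in sorted(folder.rglob("*")):
        if path.is_file() and path.suffix.lower() in PHOTO_EXTENSIONS:
            by_digest[file_digest(path)].append(path)
    # Keep only digests shared by more than one file.
    return [group for group in by_digest.values() if len(group) > 1]
```

Hashing the content rather than comparing names is what lets two copies with different file names end up in the same group. Photos that were resized or re-encoded would not match under this assumption.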

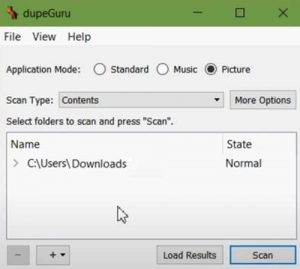

Duplicate Photo Finder (DPF) is a Windows-based program that helps you locate and remove duplicate photos. Simply indicate which folder(s) you want to search and the software goes to work.
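The reference-file idea mentioned earlier can be sketched as follows. This is an illustration under an assumption, not a description of how dupeGuru or DPF behaves: it assumes that files under a "reference" folder are always kept and that the other members of a duplicate group are the ones offered for removal. `split_group` and its parameters are hypothetical names.

```python
from pathlib import Path


def split_group(group: list, reference_dir: Path) -> tuple:
    """Split one duplicate group into (keep, removable).

    Assumption: any file under `reference_dir` is a reference and is kept.
    If the group has no reference file, the first file is kept instead.
    """
    reference_dir = reference_dir.resolve()
    keep = [p for p in group if reference_dir in p.resolve().parents]
    if not keep:
        keep = [group[0]]
    removable = [p for p in group if p not in keep]
    return keep, removable
```

Under this assumption, changing which folder is the reference flips which copies end up in the removable list, which matches the guess above that promoting other files to reference might cause the opposite set to be marked as duplicates.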

File systems support deduplication in two ways: they deduplicate whole files, or they deduplicate blocks. With block-level deduplication, identical content that repeats inside a single file can be deduplicated as well. The other difference is when the data is deduplicated: online, at write time (ZFS), or offline, after the data has been written (BTRFS).

ZFS deduplicates online, which slows down writes because everything is calculated at write time. This is why writing files to a ZFS volume mounted through FUSE is dramatically slow; this is described in the documentation. You can, however, switch deduplication on and off on a live volume. If you see data that should be deduplicated, turn deduplication on, rewrite the files to a temporary location, and then replace the originals. After that you can turn deduplication off again and restore full performance. You can also add cache disks to the storage. These can be very fast rotating disks or SSDs, and in practice they act as a substitute for RAM :)

For native ZFS on Linux you must patch the kernel and then install the ZFS management tools. I don't understand why Linux doesn't support it as a driver, the way many other operating systems and kernels do. Under Linux you should be careful with ZFS because not everything works as it should, especially when you manage the filesystem, take snapshots, and so on. But if you configure it once and don't change it, everything works properly. Alternatively, switch from Linux to OpenSolaris, which supports ZFS natively :) What is very nice about ZFS is that it works both as a filesystem and as a volume manager similar to LVM.

ZFS is older and more mature, but unfortunately it is native only under Solaris and OpenSolaris (the latter unfortunately strangled by Oracle). BTRFS is younger, but it has been very well supported lately, and it was created natively for Linux. Because BTRFS deduplicates offline, writes keep their performance, and when the host has nothing to do you periodically run a tool to perform the deduplication.
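The block-level approach described above can be illustrated with a toy in-memory store. This is only a sketch of the idea, not how ZFS or BTRFS implement it: the fixed `BLOCK_SIZE`, the `BlockStore` class, and the use of SHA-256 as the block key are all assumptions made for the example.

```python
import hashlib

BLOCK_SIZE = 4096  # illustrative value; real filesystems choose their own


class BlockStore:
    """Toy store that keeps each distinct block of data only once."""

    def __init__(self):
        self.blocks = {}  # block digest -> block bytes

    def write(self, data: bytes) -> list:
        """Store `data` and return the list of block digests describing it."""
        refs = []
        for offset in range(0, len(data), BLOCK_SIZE):
            block = data[offset:offset + BLOCK_SIZE]
            key = hashlib.sha256(block).hexdigest()
            # A block that was seen before is not stored a second time.
            self.blocks.setdefault(key, block)
            refs.append(key)
        return refs

    def read(self, refs: list) -> bytes:
        """Rebuild the original data from its block digests."""
        return b"".join(self.blocks[key] for key in refs)
```

Because the digest is computed inside `write`, this toy store deduplicates online, which is where the write-time cost comes from. An offline design would store blocks as they arrive and merge identical ones in a later pass.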
The top pro of dupeGuru is that it is cross-platform: you can accomplish the same task on Windows, Linux, or OS X using a single tool.

The better way to deduplicate is at the filesystem layer. You can use BTRFS (very popular lately), OCFS, or similar. Look at the page, especially the Features table and its data deduplication column. ZFS is available through FUSE, but that way it is very slow. If you want native support, look at the page.

If you use hardlinks instead, pay attention to the permissions on the file. Note that the owner, group, mode, extended attributes, timestamps, and ACL (if you use one) are stored in the inode. Only the file names differ, because they are stored in the directory structure and point to the inode. As a result, all file names linked to the same inode have the same access rights. You should prevent modification of such a file, because any user can damage the file for the others. It is enough for one user to write a different file under the same name: the inode number is preserved, and the original content is destroyed (replaced) for all hardlinked names.
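The hardlink caveat above can be checked with a short sketch. It assumes a POSIX system and a filesystem that supports hard links; the file names and the `hardlink_demo` function are made up for the example.

```python
import os
import stat
import tempfile


def hardlink_demo() -> dict:
    """Show that two hardlinked names share one inode, content, and mode."""
    with tempfile.TemporaryDirectory() as tmp:
        original = os.path.join(tmp, "photo.jpg")
        linked = os.path.join(tmp, "photo_copy.jpg")

        with open(original, "wb") as handle:
            handle.write(b"original bytes")
        os.link(original, linked)  # second name for the same inode

        same_inode = os.stat(original).st_ino == os.stat(linked).st_ino

        # Overwriting in place through one name changes what the other sees.
        with open(linked, "wb") as handle:
            handle.write(b"replaced")
        with open(original, "rb") as handle:
            content_via_original = handle.read()

        # Permissions live in the inode, so chmod on one name affects both.
        os.chmod(linked, 0o600)
        mode_via_original = stat.S_IMODE(os.stat(original).st_mode)

        return {
            "same_inode": same_inode,
            "content_via_original": content_via_original,
            "mode_via_original": mode_via_original,
        }
```

Programs that save by writing a new file and renaming it over the old name break the link instead of overwriting in place, so the effect depends on how each program writes.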
